With both Russia and Ukraine facing a shortage of manpower to fight on the battlefields, their war is witnessing a relatively new phenomenon of “crude ground robots” playing the roles of soldiers.

Last week, a Kremlin-affiliated social media account published a clip purporting to show a Russian unmanned ground vehicle, or UGV, delivering supplies to front-line troops while avoiding strikes by Ukrainian mini-drones and transporting a wounded soldier. However, the evacuation is never clearly shown.

Russian Tracked UGV Transports Supplies, Wounded; Comes Under Fire from Ukrainian FPV Kamikaze Drones: Video of a small-sized transport unmanned tracked platform used in the Avdeevsky direction by the Russian side. It is said that “in the battles for the Avdeevsk industrial zone,… pic.twitter.com/RAxhyjPZ0P

— EurAsian Times (@THEEURASIATIMES) December 5, 2023

According to Sam Bendett, a research analyst at the US-based Center for Naval Analyses think tank, armed aerial drones and artillery are threatening troop movements on the frontlines in Ukraine, so troops carrying out regular tasks like logistics, supply, and evacuation are in danger of being discovered and attacked. In response, Ukrainian and Russian forces are fielding “simple, DIY platforms” for such tasks, Bendett points out.

Though neither side officially admits it, it is becoming increasingly apparent that neither Russia nor Ukraine is finding enough volunteers to join the armed forces. Moscow is doing everything possible to mobilize people and lure them into signing contracts with the military.

Russia’s annual autumn conscription draft is said to have kicked off in October, pulling in some 130,000 fresh young men. Though Moscow says conscripts won’t be sent to Ukraine, after a year of service, they automatically become reservists — prime candidates for mobilization.

The same is the case with Ukraine. The country constantly needs to replace the tens of thousands who’ve been killed or injured. But many Ukrainian men simply do not want to fight. As the BBC has reported, thousands have left the country, sometimes after bribing officials, and others are finding ways of dodging recruitment officers, who, in turn, have been accused of increasingly heavy-handed tactics.

The problem is serious enough that President Volodymyr Zelensky has sacked every regional head of recruitment in Ukraine after widespread allegations against officers in the system, including bribe-taking and intimidation.

Against this background, the role of artificial intelligence (AI) and autonomous systems in the war in Ukraine is attracting global attention. Reportedly, the IRONCLAD, a state-of-the-art unmanned robot, is currently undergoing testing on the front lines by the Ukrainian Armed Forces.

This advanced machine is supposed to possess many capabilities; “it can support assaults on opposition placements, carry out reconnaissance missions, and provide crucial fire support to troops on the field.” It can significantly reduce the risk to soldiers’ lives by enabling remote operations.

According to Ukraine’s Minister of Digital Transformation, Mykhailo Fedorov, “the robot (Ironclad) can reach speeds of up to 20 kilometers per hour. It features a thermal imaging camera fitted with the ShaBlya M2 combat turret. Additionally, its armored shell offers robust protection against small arms. IRONCLAD’s operations can be managed remotely, which allows the control station to be positioned at a safe distance.”

IRONCLAD may not be the first robot to undergo testing on the Ukrainian battlefield. Nearly a year ago, Ukraine reportedly disclosed the successful trial of “THeMIS,” a robot from Estonia. It is said to serve as a vehicle for transporting cargo and wounded soldiers on the battlefield.

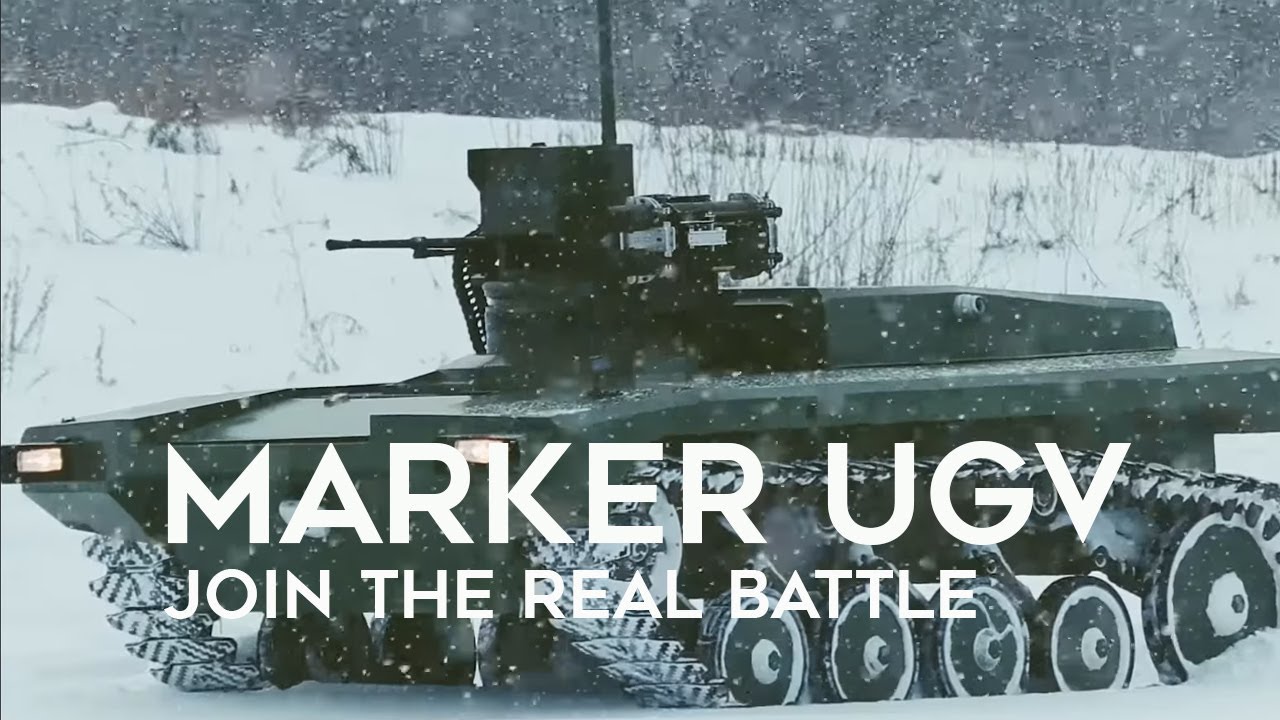

Likewise, Russia is said to be conducting battlefield tests with robots in Ukraine, specifically deploying the Zubilo robot to the combat zone. It is a 13.3-ton assault ground vehicle designed to function as an unmanned transport system capable of carrying a payload of up to 2.7 tons.

With the capability to withstand the impact of shrapnel from explosive devices and artillery fragments, Zubilo boasts several other utilities, including ammunition delivery, cargo transportation, casualty evacuation, and even providing power for radios and quadcopters.

Seen from a broader perspective, the war in Ukraine is proving to be a test case for AI in multiple dimensions. Both Russia and Ukraine have used AI to enhance disinformation campaigns and have employed drones in an unprecedented manner to collect intelligence and carry out strikes. AI has also been applied to processing enemy battlefield communications, facial recognition, cyber defense, and other tasks.

Drones, robots, and other AI manifestations in the war in Ukraine not only show how new technologies are shaping the battlefield in real time but also highlight a longer-term trend of militaries worldwide accelerating investment in research and development of autonomous technologies.

Admittedly, progress in these fields promises to reduce risk to deployed forces, minimize the cognitive and physical burden on warfighters, and significantly increase the speed of information processing, decision-making, and operations, among other advantages.

At the same time, experts caution that such technological breakthroughs, and the use of these applications and systems in contested environments, may also come with risks and costs, from ethical concerns about responsibility for lethal force to unexpected behavior of brittle and opaque systems.

“This is as much a philosophical question as a technological one. From a technology perspective, we absolutely can get there. From a philosophical point of view and how much we trust the underlying machines, I think that can still be an open discussion.

“So a defensive system that intercepts drones, if it has a surface-to-air missile component, that’s still lethal force if it misfires or misidentifies. I think we have to be very cautious in how we deem defensive systems non-lethal systems if there is the possibility that they misfire, as a defensive system could still lead to death.

“When it comes to defensive applications, there’s a strong case to be made there … but we need to look at what action is being applied to those defensive systems before we go too out of the loop,” Ross Rustici, former East Asia Cyber Security Lead, US Department of Defense, said in a recent interview.

Rustici has a point when he says that in the case of “jamming” or some other non-kinetic countermeasure that would not injure or harm people if it misfired or malfunctioned, it is much more efficient to use AI and computer automation. But in the case of lethal force, there are still many reliability questions about fully “trusting” AI-enabled machines to make determinations.

“The things that I want to see going forward is having some more built-in error handling so that when a sensor is degraded, and there are questions about the reliability of the information, you have that visibility as a human to make the decision. Right now, there is a risk of having corrupted, undermined, or incomplete data being fed to a person who is used to overly relying on those systems.

“Errors can still be introduced into the system that way. I think it’s correct to keep that human-machine interface separated and have the human be a little skeptical of the technology to ensure mistakes don’t happen to the best of our ability,” Rustici explains.

No wonder the “Stop Killer Robots” campaign, which is calling for a pre-emptive ban on “fully autonomous” weapons, is gaining momentum. However sophisticated the machine may be, it is argued, the human being must remain its master.

It is therefore understandable why there are calls for “manned-unmanned teaming” or a “human-machine interface.”

- Author and veteran journalist Prakash Nanda is Chairman of the Editorial Board – EurAsian Times and has commented on politics, foreign policy, and strategic affairs for nearly three decades. A former National Fellow of the Indian Council for Historical Research and recipient of the Seoul Peace Prize Scholarship, he is also a Distinguished Fellow at the Institute of Peace and Conflict Studies.

- CONTACT: prakash.nanda (at) hotmail.com
