In the latest application of artificial intelligence (AI) in space, Chinese researchers claim to have developed an AI system that appears to have learned the art of deception in a simulated space battle, the Hong Kong-based South China Morning Post reported.

As an experiment, the AI system directed three small satellites to ‘approach and capture’ a high-value target thousands of times. The report said that the targeted spacecraft learned to sense the impending threat and fired powerful thrusters to evade its pursuers.

In response, the AI instructed the three hunters to veer off their original path as if abandoning the pursuit, setting a trap for the target. Then, at a distance of less than 10 meters (33 feet), one of the hunting satellites abruptly reversed course and deployed a capture device.

In a paper published on April 25 in the domestic peer-reviewed journal Aerospace Shanghai, lead scientist Dang Zhaohui, a professor of astronautics at Northwestern Polytechnical University in Xi’an, remarked, “This is spectacular.”

The team behind the system stressed that pursuing a large target in orbit is not as simple as most people believe. According to the report, most previous research treated the pursuit as a mathematical optimization problem, with the target assumed to be slow, dumb, and blind.

Powerful nations defend their valuable space assets with surveillance networks and early-warning sensors, and also use AI to protect their satellites. The report also noted that attacking satellites, particularly early-warning satellites, is frequently considered a precursor to nuclear war.

Dang and his colleagues from the Shanghai Institute of Aerospace System Engineering claimed that humans would have no place in the new form of space war they envision, with AI controlling both the targets and the hunters.

How Did Chinese Researchers Conduct The Experiments?

The researchers let the AI play the pursuit-and-evasion game continuously, without human interference. After each round, an AI “critic” reviewed the outcome and handed out rewards and penalties.

For example, using too much power, taking too long, or colliding with a teammate earned penalties for the hunters and bonuses for their target.
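The reward scheme described above, where every penalty for the hunters is a bonus for the target, can be sketched as a zero-sum scoring function. This is a minimal illustration under stated assumptions, not the researchers’ code: the weights, the `RoundOutcome` fields, the `score_round` function and the capture bonus are all hypothetical, since the report does not describe the paper’s actual reward design.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical weights chosen for illustration only;
# the paper's real values are not given in the report.
POWER_WEIGHT = 0.01       # penalty per unit of power/propellant used
TIME_WEIGHT = 0.1         # penalty per time step spent in the round
COLLISION_PENALTY = 10.0  # penalty per hunter-hunter collision
CAPTURE_REWARD = 100.0    # assumed bonus for a successful capture


@dataclass
class RoundOutcome:
    power_used: float  # total power spent by the hunting team
    steps_taken: int   # how long the round lasted
    collisions: int    # collisions between hunting satellites
    captured: bool     # whether a hunter captured the target


def score_round(outcome: RoundOutcome) -> Tuple[float, float]:
    """Return (hunter_reward, target_reward) for one round.

    Zero-sum assumption: whatever the hunters lose, the target gains,
    and vice versa.
    """
    hunter = 0.0
    hunter -= POWER_WEIGHT * outcome.power_used
    hunter -= TIME_WEIGHT * outcome.steps_taken
    hunter -= COLLISION_PENALTY * outcome.collisions
    if outcome.captured:
        hunter += CAPTURE_REWARD
    return hunter, -hunter


if __name__ == "__main__":
    # A wasteful, failed round: the hunters' losses become the target's gains.
    print(score_round(RoundOutcome(power_used=200.0, steps_taken=50,
                                   collisions=1, captured=False)))
```

Making the game zero-sum is one simple way to express "fines for the hunters but bonuses for their target"; a real system could just as well score each side independently.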

In the first 10,000 rounds of training, both sides struggled, with penalties far outweighing rewards. After around 20,000 rounds, the hunting satellites began learning faster, “probably because they worked as a group,” and gained an advantage, Dang said.

Soon, the target satellite also learned to recognize the hunters’ simple tactics and became better at evading them. After these repeated setbacks, the hunting AI changed the game by devising far more sophisticated techniques, such as teamwork, planning, and deception, which considerably boosted its chances of a successful capture.

According to Dang’s team, “After more than 220,000 rounds of training, the target was left with no room for mistakes.” China has been integrating AI into its satellites as part of its push to become a major space power.

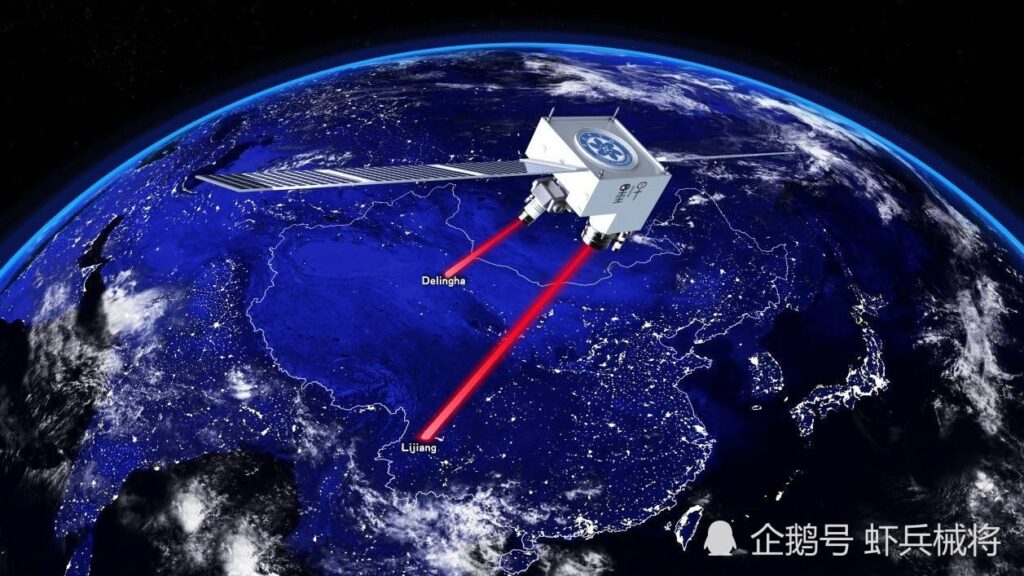

As previously reported by the EurAsian Times, the country recently unveiled an advanced artificial intelligence system that allows low-cost commercial imaging satellites to become effective spy platforms.

In June of last year, China demonstrated its AI-enhanced satellite reconnaissance capabilities when its Beijing-3 commercial satellite completed an in-depth scan of 3,800 square kilometers of San Francisco Bay in under 42 seconds from an altitude of 500 kilometers.

China also intends to put the entire 138-satellite Jilin-1 constellation into orbit by 2025. Equipping these satellites with onboard AI that significantly improves their imaging would strengthen their persistent monitoring capability and their resilience against US and allied anti-satellite missiles.

However, some scientists have warned that artificial intelligence has made space more dangerous.

A Chinese paper published in May in the Journal of International Security Studies stated, “The application of artificial intelligence in space will have a disruptive impact on global strategic stability.”

According to the paper, AI has the potential to make anti-satellite tactics more accurate, more damaging, and harder to trace, raising the risk of a ‘pre-emptive’ strike by some countries.

“Attacking satellites, especially early-warning satellites, is often seen as a precursor to nuclear war,” the paper’s author continued, urging the international community to adopt laws governing AI activity in space.

Last year, two SpaceX Starlink satellites came dangerously close to China’s Tiangong space station, forcing the station to take evasive action. Military analysts have since argued that China needs the capability to destroy or disable such satellites if they threaten the country’s security.

China’s plans to embed military AI in commercial satellites may be interpreted as a proliferation strategy to improve the survivability of its space-based intelligence, surveillance, and reconnaissance (ISR) capabilities.

- Contact the author at ashishmichel@gmail.com
